How would you answer "Why does a CPU throttle?"

Last night, a university junior posted a short question on Slack.

Why does a CPU throttle?

I saw the question and immediately replied with a straightforward, almost too simple answer.

Because it needs more CPU than what’s been provided?

While I was preparing a more detailed follow-up, another friend came up with a different answer.

The faster the computation, the more heat it generates. If it gets too hot, it’ll melt — so it slows down.

My mind went blank for a moment.

I had answered why throttling occurs, while this friend answered why throttling needs to exist.

Same question, completely different interpretations.

One question, six perspectives

“Why does a CPU throttle?”

If you received this one-liner, how would you answer?

Think about it for a moment — a surprisingly wide range of intentions could be hiding behind this question.

1. Operational: My server is experiencing CPU throttling right now. What’s causing it?

2. Comparative: Memory hits OOM, but why does CPU use throttling instead?

3. Fundamental: Why does the concept of throttling exist in CPUs at all? What’s its origin?

4. Conditional: Under what conditions does throttling generally happen? Quota exceeded? Contention?

5. Implementation: How is throttling actually implemented? (e.g. CFS quota, cgroup, …)

6. Optimization: When throttling occurs, how do you fix or avoid it?

I had read it in the most straightforward way — number 4. My friend read it as number 3.

My second answer

I continued with a more detailed answer from perspective 2.

Memory is fundamentally about occupying space. It’s like putting things into a limited container: once it’s full, you can’t allocate any more, and waiting doesn’t free anything up. That’s why, when a process hits its memory limit, the kernel’s OOM (Out of Memory) killer terminates it outright.

But CPU is more about time than space. It’s a resource where each process takes turns consuming time. No single process owns it — multiple processes share it through time slicing. In other words, CPU is inherently a shareable resource.

That’s why the concept of throttling makes sense. When a process exceeds its allocated CPU quota, instead of killing it like OOM, you can just pause its execution and say “wait for your next turn.”

Memory is space, CPU is time. This difference creates two fundamentally different limiting mechanisms: OOM and throttling. My answer seemed to be appreciated (though I’m not sure if it fully answered the question).
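To make the "wait for your next turn" idea concrete, here is a toy model of a CFS-style quota. It is only a sketch: `simulate_quota` and its parameters are names I made up for illustration, it assumes a single-threaded task with no other contention, and it is not how the kernel actually implements bandwidth control.

```python
def simulate_quota(demand_ms, quota_ms=50, period_ms=100):
    """Toy model of a CPU quota: at most quota_ms of CPU time per period_ms window.

    demand_ms is the total CPU time the task needs.
    Returns (wall_clock_ms, throttled_periods).
    Assumption: one thread, nothing else competing for the CPU.
    """
    if not 0 < quota_ms <= period_ms:
        raise ValueError("expected 0 < quota_ms <= period_ms")

    remaining = demand_ms
    wall_clock = 0
    throttled_periods = 0
    while remaining > 0:
        run = min(remaining, quota_ms)
        remaining -= run
        if remaining > 0:
            # Quota used up with work still left: the task is paused
            # (not killed) until the next period begins.
            wall_clock += period_ms
            throttled_periods += 1
        else:
            # Finished within this period's quota.
            wall_clock += run
    return wall_clock, throttled_periods


# 120 ms of work with a 50 ms quota per 100 ms period
print(simulate_quota(120, quota_ms=50, period_ms=100))  # (220, 2)
```

The task still finishes all of its work; it just takes 220 ms of wall-clock time instead of 120 ms. Memory has no equivalent of "come back next period", which is the asymmetry I was trying to describe.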

The remaining question

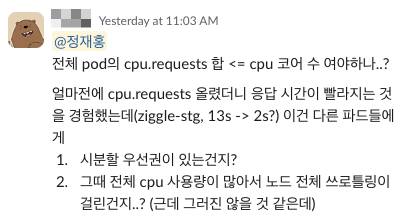

Right after the thank-you, another question followed from yet another perspective.

Should the sum of all pod cpu.requests be <= the number of CPU cores? I recently raised cpu.requests and response times got faster. Is this because:

- There’s a time-slicing priority?

- Overall CPU usage was high at the time, causing node-wide throttling?

This time it was a concrete question about CPU scheduling in Kubernetes. I shared an LY Corporation (formerly LINE) article on efficient CPU usage in Kubernetes that I had read before, along with an explanation of pod scheduling, CPU requests, and time-slicing priority.
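The "time-slicing priority" part can be sketched roughly like this. As far as I understand it, the kubelet turns a CPU request into a relative weight (`cpu.shares` on cgroup v1, at 1024 shares per core with a minimum of 2), and when the node is busy the scheduler divides CPU time in proportion to those weights. The function names below are hypothetical, and the model assumes every pod is CPU-bound at the same time and ignores limits entirely.

```python
def milli_cpu_to_shares(milli_cpu):
    """Approximate cgroup v1 cpu.shares for a request in millicores.

    1000m (one core) maps to 1024 shares; the floor of 2 mirrors the
    minimum share value. Treat this as a simplified model.
    """
    return max(2, milli_cpu * 1024 // 1000)


def cpu_split_under_contention(requests_milli, node_milli):
    """Split node CPU (in millicores) in proportion to each pod's shares.

    Assumption: all pods want as much CPU as they can get, so the node
    is fully contended. Idle pods would give their time to the others.
    """
    shares = {pod: milli_cpu_to_shares(m) for pod, m in requests_milli.items()}
    total = sum(shares.values())
    return {pod: node_milli * s / total for pod, s in shares.items()}


# Hypothetical 2-core node with two busy pods
before = cpu_split_under_contention({"api": 250, "batch": 1000}, 2000)
after = cpu_split_under_contention({"api": 1000, "batch": 1000}, 2000)
print(before)  # {'api': 400.0, 'batch': 1600.0}
print(after)   # {'api': 1000.0, 'batch': 1000.0}
```

In this model, raising the request for `api` gives it a larger slice of a busy node without anyone being throttled by a quota, which would be consistent with the first of the two guesses in the question.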

Takeaway

It’s a simple question, when you think about it. But precisely because it’s so simple, understanding the intent behind it — what answer they’re looking for, what background knowledge they have — becomes all the more important.

A single question can yield vastly different answers, and those answers shift entirely depending on the asker’s intent and context.

I could have just asked “could you elaborate?” right away. But wondering what this friend was really curious about when asking about CPU throttling last night, and thinking through how I could best answer that question — that process itself was the most fun part.